Fake job seekers use AI to interview for remote jobs, tech CEOs say

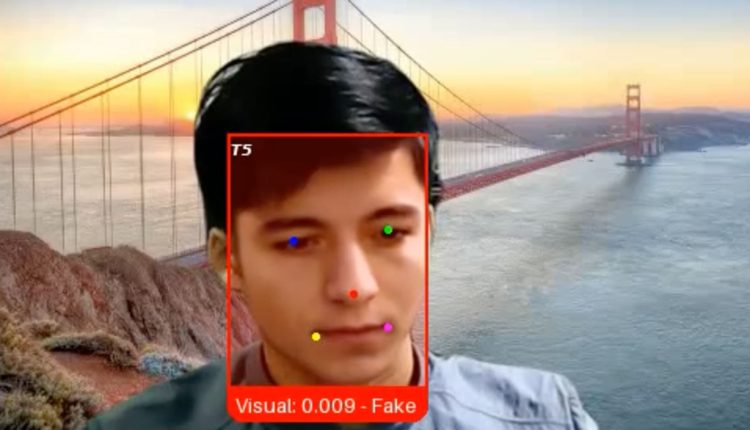

An image provided by Pindrop Security shows a fake job candidate the company dubbed “Ivan X,” a fraudster who uses deepfake AI technology to mask his face, according to Pindrop CEO Vijay Balasubramaniyan.

Courtesy: Pindrop Security

When voice authentication startup Pindrop Security posted a recent job opening, one candidate stood out from hundreds of others.

The applicant, a Russian coder named Ivan, seemed to have all the right qualifications for the senior engineering role. When he was interviewed over video last month, however, Pindrop's recruiter noticed that Ivan's facial expressions were slightly out of sync with his words.

That is because the candidate, whom the company has since dubbed “Ivan X,” was a fraudster using deepfake software and other generative AI tools in a bid to get hired by the tech company, said Pindrop CEO and co-founder Vijay Balasubramaniyan.

“Gen AI has blurred the line between what it means to be human and what it means to be a machine,” said Balasubramaniyan. “What we're seeing is that individuals are using these fake identities and fake faces and fake voices to secure employment, sometimes even going so far as doing a face swap with another person who shows up for the job.”

Companies have long fought off attacks from hackers hoping to exploit weaknesses in their software, employees or vendors. Now another threat has emerged: job candidates who are not who they say they are, using AI tools to fabricate photo IDs, generate employment histories and provide answers during interviews.

The rise of AI-generated profiles means that by 2028, 1 in 4 job candidates worldwide will be fake, according to research and advisory firm Gartner.

The risk to a company of hiring a fake job seeker can vary depending on the person's intentions. Once hired, the fraudster can install malware to demand a ransom from the company, or steal its customer data, trade secrets or funds, according to Balasubramaniyan. In many cases, the fraudulent employees are simply collecting a salary that they would not otherwise be able to earn, he said.

'Massive' increase

Cybersecurity and cryptocurrency companies have seen a recent surge in fake job seekers, industry experts told CNBC. Because these companies are often hiring for remote roles, they present valuable targets for bad actors, these people said.

Ben Sesser, the CEO of BrightHire, said he first heard of the issue a year ago and that the number of fraudulent job candidates has risen “massively” this year. His company helps more than 300 corporate clients in finance, technology and health care assess prospective employees in video interviews.

“Humans are generally the weak link in cybersecurity, and the hiring process is an inherently human process with a lot of hand-offs and a lot of different people involved,” said Sesser. “It has become a weak point that people are trying to expose.”

However, the problem is not limited to the tech industry. More than 300 U.S. companies inadvertently hired impostors with ties to North Korea for IT work, including a major national television network, a defense manufacturer, an automaker and other Fortune 500 companies, the Justice Department alleged in May.

The workers used stolen American identities to apply for remote jobs and used remote networks and other techniques to mask their true locations, the DOJ said. Ultimately, they sent millions of dollars in wages to North Korea to help fund the country's weapons program, the Justice Department alleged.

That case exposed a small part of what U.S. authorities have said is an extensive network of thousands of IT workers with North Korean ties. The DOJ has since filed more cases involving North Korean IT workers.

A growth industry

Fake job seekers are not letting up, if the experience of Lili Infante, founder and chief executive of CAT Labs, is any indication. Her Florida-based startup sits at the intersection of cybersecurity and cryptocurrency, which makes it particularly tempting for bad actors.

“Every time we list a job posting, we get 100 North Korean spies applying to it,” said Infante. “When you look at their résumés, they look fantastic. They use all the keywords for what we are looking for.”

Infante said her company relies on an identity verification company to weed out fake candidates, part of an emerging sector that includes companies such as iDenfy, Jumio and Socure.

An FBI wanted poster shows suspects the agency said are IT workers from North Korea, officially called the Democratic People's Republic of Korea.

Source: FBI

The fake employee industry has expanded beyond North Koreans in recent years to include criminal groups in Russia, China, Malaysia and South Korea, according to Roger Grimes, a veteran computer security consultant.

Ironically, some of these fraudulent workers would be considered top performers at most companies, he said.

“Sometimes they do the role poorly, and then sometimes they perform it so well that I've actually had a few people tell me they were sorry they had to let them go,” said Grimes.

His employer, the cybersecurity company KnowBe4, said in October that it inadvertently hired a North Korean software engineer.

The worker used AI to alter a stock photo, combined it with a valid but stolen U.S. identity, and got through background checks, including four video interviews, the company said. He was only discovered after the company found suspicious activity coming from his account.

Fighting deepfakes

Despite the DOJ case and a few other publicized incidents, hiring managers at most companies are generally unaware of the risks of fake job candidates, according to BrightHire's Sesser.

“They are responsible for talent strategy and other important things, but being on the front lines of security has historically not been one of them,” he said. “People think they are not experiencing it, but I think it's probably more likely that they just don't realize that it is going on.”

As the quality of deepfake technology improves, the problem will become harder to avoid, said Sesser.

As for “Ivan X,” Pindrop's Balasubramaniyan said the startup used a new video authentication program it created to confirm that he was a deepfake fraud.

While Ivan claimed to be in western Ukraine, his IP address indicated that he was actually thousands of miles to the east, in a possible Russian military facility near the North Korean border.

Pindrop, backed by Andreessen Horowitz and Citi Ventures, was founded more than a decade ago to detect fraud in voice interactions. Its customers include some of the largest U.S. banks, insurers and health companies.

“We are no longer able to trust our eyes and ears,” said Balasubramaniyan. “Without technology, you are worse off than a monkey with a random coin toss.”
